The Layered Databus: Foundation of a Modern Distributed Architecture

John Patchin and Patrick Keliher

September 29, 2020

It’s a data centric world out there.

Today’s applications run on data – lots of data. A continuous flow of high-volume data streams across networks, across different transport protocols and across geographically distributed devices to ensure applications run as designed. These connected systems often require capabilities such as fault tolerance, scalability, low latency and sub-system isolation – and of course, interoperability so that data gets to where it needs to go, when it needs to get there.

One popular software framework that bridges legacy and modern systems is the data-centric databus, which is built on the Data Distribution Service™ (DDS) standard. DDS provides the communications layer via a shared databus that enables data in motion to flow where required. It’s a highly scalable, highly efficient architecture and is recommended by the Industrial Internet Consortium® in its Industrial Internet Reference Architecture (IIRA) [1] architectural guideline document.

Unlike a database, which handles data at rest, the DDS databus handles data in motion. You can think of it as a shared global space where data is continuously flowing between publishers and their matched subscribers, scalable to hundreds, thousands or more endpoints. The databus has been used in thousands of systems to solve increasingly complex design integration challenges without the need for custom coding. Engineers can create multiple DDS-based layers (databuses) to separate, isolate and selectively share communications. A layered databus architecture can do this with an impressive and flexible set of mechanisms, notably Quality of Service (QoS), filtering, automatic discovery and security.
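The publish-subscribe matching and filtering described above can be pictured with a small in-memory sketch. This is not the DDS API or any RTI product interface: the `Databus` class, its `subscribe` and `publish` methods, and the `Temperature` topic are hypothetical names chosen for illustration, and real DDS discovery, QoS and security are not modeled.

```python
from collections import defaultdict
from typing import Any, Callable, Dict, List, Optional, Tuple

Sample = Dict[str, Any]
Callback = Callable[[Sample], None]
Filter = Callable[[Sample], bool]


class Databus:
    """Toy in-memory stand-in for one databus (hypothetical, not DDS)."""

    def __init__(self, name: str) -> None:
        self.name = name
        # topic name -> list of (optional content filter, callback) pairs
        self._subscribers: Dict[str, List[Tuple[Optional[Filter], Callback]]] = (
            defaultdict(list)
        )

    def subscribe(self, topic: str, callback: Callback,
                  content_filter: Optional[Filter] = None) -> None:
        """Register interest in a topic, optionally narrowed by a filter."""
        self._subscribers[topic].append((content_filter, callback))

    def publish(self, topic: str, sample: Sample) -> int:
        """Deliver a sample to every matched subscriber; return the count."""
        delivered = 0
        for content_filter, callback in self._subscribers[topic]:
            if content_filter is None or content_filter(sample):
                callback(sample)
                delivered += 1
        return delivered


if __name__ == "__main__":
    bus = Databus("edge")
    everything: List[Sample] = []
    hot_only: List[Sample] = []

    # Two subscribers on the same topic; the second filters on content.
    bus.subscribe("Temperature", everything.append)
    bus.subscribe("Temperature", hot_only.append, lambda s: s["celsius"] > 90.0)

    bus.publish("Temperature", {"sensor": "s1", "celsius": 72.5})
    bus.publish("Temperature", {"sensor": "s2", "celsius": 95.0})

    print(len(everything), len(hot_only))  # prints: 2 1
```

The publisher never names its receivers. It only names a topic, and the bus decides who is matched, which is the property that lets endpoints be added without changing existing code.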

Though leveraging multiple databuses in a system enables many powerful capabilities, it also introduces some challenges, such as when information needs to be shared across those databuses. This is where the layered databus comes into play. A layered databus architecture creates a virtual plug-and-play communications platform to modernize and expand legacy applications and systems. It eliminates the need to recompile, rebuild, revalidate and reset proprietary monolithic systems when a new subsystem needs to connect.

Layered DDS databuses solve complex problems of expansion and scalability without sacrificing the granularity and control that complex, interconnected designs require. The layered databus architecture makes it comparatively easy to enable proprietary systems to interoperate with new systems. Layers are transparent and operate automatically: They separate and share based on the needs of the domain publishers and subscribers.

How a Layered Databus Works

In industrial systems, one common architecture pattern is made up of multiple databuses layered by communication QoS and data model needs. Typically, databuses will be implemented at the edge of a system, which often represents data collected from sensors or devices used in running smart machines or lower-level subsystems as part of a complex system (e.g., a car, an oil rig or a hospital).

On another hierarchy level, there can be one or more databuses that integrate additional machines or systems, facilitating data communications between and within the higher-level control center or backend systems. The backend or control center layer could be the highest layer databus in the system, but there can certainly be more than these three layers.

Advantages of the Layered Databus

A layered databus can greatly reduce system complexity. Engineers often need to scale to hundreds of thousands of devices and applications without adding complexity, and a layered databus provides the framework to simplify data exchange at that scale. Other benefits of the layered databus include:

- Fast device-to-device integration with delivery times in milliseconds or microseconds

- Automatic data and application discovery within and between databuses

- Scalable integration comprising hundreds of thousands of domain participants

- Natural redundancy to allow extreme availability and resilience

- Hierarchical subsystem isolation enabling the development of complex system designs

- Robust security that is customizable via any standard API and can run over any type of transport

Another major advantage of layered databus architecture is its ability to integrate greenfield systems with legacy design practices. The layered databus answers the question of how to create a communications platform that enables legacy systems to scale to meet the proliferating demands of the modern industrial network.

Layered Databus Architecture in Action

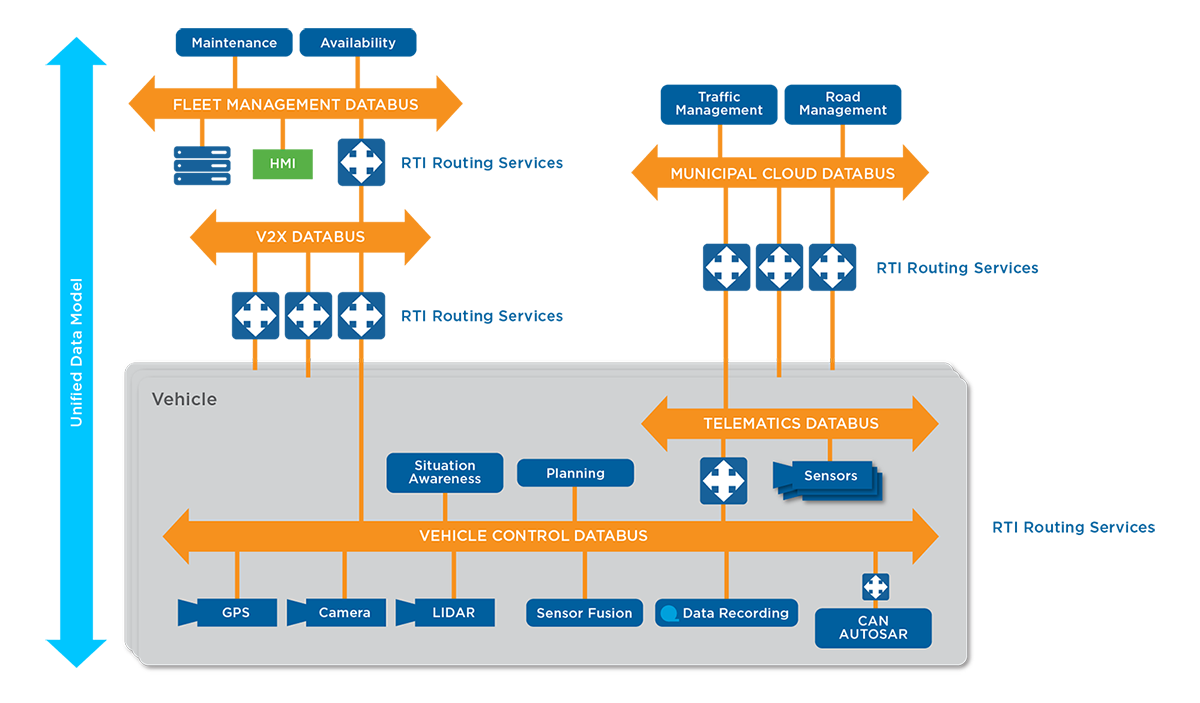

Modern transportation architectures have a mix of autonomous and non-autonomous elements. The layered databus cleverly bridges these disparate elements. There can be other layers for vehicle-to-vehicle communications, fleet management communications and much more.

Figure 1: Sample layered databus architecture used in an autonomous vehicle scenario.

In this example, Layer 1 can be used as the communications platform between the lower-bandwidth legacy systems and the higher-bandwidth sensors required to provide autonomous capabilities.

Layer 2 may also be within the vehicle. In this example, Layer 2 supports telematics. Note that there is a gateway (RTI Routing Service) between the two layers. One of the important roles of the gateway is to isolate critical safety-related data flowing across Layer 1 from non-critical telematics in Layer 2. The design goal is that any failure in telematics will not affect the safe operation of the vehicle. The DDS databus includes built-in security to protect the system against unauthorized traffic.
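The gateway's isolating role can be sketched as a one-way, allow-listed bridge between two layers. This is only an illustration of the idea and does not reflect how RTI Routing Service is configured or implemented: the `Layer` and `Gateway` classes and the `VehicleSpeed`, `BrakeCommand` and `Infotainment` topic names are assumptions made for this example.

```python
from typing import Any, Callable, Dict, List, Set

Sample = Dict[str, Any]
Callback = Callable[[Sample], None]


class Layer:
    """Toy stand-in for one databus layer (hypothetical, not a DDS API)."""

    def __init__(self, name: str) -> None:
        self.name = name
        self._listeners: Dict[str, List[Callback]] = {}

    def subscribe(self, topic: str, callback: Callback) -> None:
        self._listeners.setdefault(topic, []).append(callback)

    def publish(self, topic: str, sample: Sample) -> None:
        # Copy the list so callbacks may subscribe while we iterate.
        for callback in list(self._listeners.get(topic, [])):
            callback(sample)


class Gateway:
    """Forwards only allow-listed topics, and only from source to target."""

    def __init__(self, source: Layer, target: Layer, allowed: Set[str]) -> None:
        for topic in allowed:
            # Bind the topic per iteration so each route keeps its own name.
            source.subscribe(topic, lambda s, t=topic: target.publish(t, s))


if __name__ == "__main__":
    layer1 = Layer("vehicle-control")   # safety-related traffic
    layer2 = Layer("telematics")        # non-critical traffic
    Gateway(layer1, layer2, allowed={"VehicleSpeed"})

    seen_on_layer2: List[Sample] = []
    seen_on_layer1: List[Sample] = []
    layer2.subscribe("VehicleSpeed", seen_on_layer2.append)
    layer2.subscribe("BrakeCommand", seen_on_layer2.append)
    layer1.subscribe("Infotainment", seen_on_layer1.append)

    layer1.publish("VehicleSpeed", {"kph": 48})      # forwarded to Layer 2
    layer1.publish("BrakeCommand", {"force": 0.7})   # stays on Layer 1
    layer2.publish("Infotainment", {"track": 3})     # never reaches Layer 1

    print(seen_on_layer2, seen_on_layer1)  # prints: [{'kph': 48}] []
```

Because the bridge here is one-directional and topic-scoped, nothing published on the telematics layer can arrive on the control layer, which mirrors the stated design goal that a telematics failure should not affect safe operation.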

Layer 3 provides the communications platform for the municipal cloud. These cloud-based services provide critical communications with Layer 2. This constant flow of two-way communications provides vital information on road conditions, weather patterns, accidents and detours.

With the unified data model, engineering teams can use a consistent data model from the smallest sensor in the car up to the cloud. In contrast, proprietary implementations may have different teams working on different protocols and interfaces. The unified data model not only eliminates this resource drain, it also means that changes to the platform, protocols or architecture do not require redesigning applications.
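One way to picture a unified data model is a single type definition that every layer shares, with application logic written against the type rather than against a transport. In DDS, types are commonly defined in IDL; the Python dataclass below is a stand-in, and the `RoadCondition` type, its fields, the 0.4 friction threshold and the JSON encoding are all assumptions made for illustration.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class RoadCondition:
    """Hypothetical shared type; every layer uses this one definition."""
    segment_id: str
    surface: str
    friction: float


def encode(sample: RoadCondition) -> bytes:
    """Serialize for whatever transport sits underneath (JSON as a stand-in)."""
    return json.dumps(asdict(sample), sort_keys=True).encode("utf-8")


def decode(payload: bytes) -> RoadCondition:
    """Rebuild the same typed sample on the receiving side."""
    return RoadCondition(**json.loads(payload.decode("utf-8")))


def is_slippery(sample: RoadCondition) -> bool:
    """Application logic depends only on the type, not on the transport."""
    return sample.friction < 0.4


if __name__ == "__main__":
    from_sensor = RoadCondition("A12-north", "ice", 0.15)
    at_cloud = decode(encode(from_sensor))
    print(at_cloud == from_sensor, is_slippery(at_cloud))  # prints: True True
```

If the encoding or transport were swapped out, only `encode` and `decode` would change. `is_slippery` and any other code written against `RoadCondition` would stay as it is.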

Learn How RTI Can Help

To learn more about the layered databus, listen to the on-demand Tech Talk, download the white paper, or talk to your RTI representative.

[1] Industrial Internet Consortium (later renamed the Industry IoT Consortium), https://www.iiconsortium.org/IICF.htm

About the authors

Patrick “PK” Keliher is a Regional Field Application Engineering Manager for Real-Time Innovations. He has over 30 years of experience in networking and embedded software as a Software Engineer and Field Applications Engineer. Before coming to RTI his past roles included simulation technology, Bluetooth®, wireless networking, real-time operating systems, and aerospace and defense software development. PK received his double BS in Computer Science and Electrical Engineering from Washington University in St. Louis.

John Patchin is a Senior Applications Engineer for Real-Time Innovations (RTI). He received his BS and MS in Mechanical Engineering from the University of Kansas. John has over 25 years of experience in industrial automation, embedded software development and networking. Prior to RTI, he developed machine tool and robot control systems at Hewlett-Packard and KUKA robotics, where he specialized in sensor feedback applications. He also spent time at Lynx Software Technologies, where he focused on real-time embedded software for aerospace and military markets. John works on the Professional Services team at RTI, helping customers successfully integrate and deploy their DDS-based systems.
