
Parallel Trajectories: Behind the Scenes of the Indy Autonomous Challenge

A guest blog by Andrew Saba, Chief Engineer for the MIT-PITT-RW team and master's student at Carnegie Mellon University

I've been thinking a lot about trajectories lately, both in the sense of autonomous racing and my university education. I feel very fortunate that my path through school has led me to not only participate in the Indy Autonomous Challenge (IAC), but also to serve as chief engineer for the MIT-PITT-RW (MPRW)* autonomous racing team, led by co-captains Cindy Heredia and Rotimi Adetunji. Together, we spearheaded the evolution of the team to where it is today.

Much like the parts of a race car, our team is made up of top-grade components, each one fulfilling a critical role and none more important than any other. It has been the experience of a lifetime, and we're all still just getting started. I'd like to share our journey with you.

The Indy Autonomous Challenge is a series of challenges where university teams from around the world compete to build, program and race fully autonomous Dallara AV-21s on world-famous race tracks at speeds in excess of 180 mph. At the start, it might have seemed as if we were up against the ropes in this fight. As the only student-led team, we were juggling our full-time undergraduate workload of classes and projects while competing against more experienced and well-funded teams of graduate students who were working full-time on this challenge. Yet oddly enough, this wound up becoming our unique advantage: there was a passion in what we were doing. We were like the idealistic founders of a maverick startup company.

At first, we assigned responsibility for each major vertical (Perception and Tracking, for example) to one of the four universities. But we quickly realized that there was an issue of design confidence among the new team members. Even though the Dallara AV-21 is very expensive, we also had to be okay with failure, including potential crashes. Failures show the pathway to success, and the uncertainty they bring can be mitigated by experience.

Our solution was to adopt a highly matrixed workflow, to help build confidence and let team members work in the areas they were most passionate about. We reorganized the team to ensure all verticals were represented at each school.

Working on one project with many moving parts across four universities required an extra effort to share information, so we decided to:

- Enact a 'Buddy System' for new members, who are each assigned a mentor with team experience.

- Use Zoom during work sessions, for open discussions and Q&A with mentors.

- Use Slack for ongoing questions and ideas, which also served as a written resource for easy review.

- Hold weekly check-in calls to encourage cohesion within and between the vertical groups.

The team grew organically into a very flat organizational structure. As I mentioned, Rotimi Adetunji (University of Pittsburgh/Cornell) and Cindy Heredia (MIT) became the co-captains, while I served as chief engineer. This meant there was no one single thought leader – great ideas came from everywhere in the team. I have special praise for Rotimi and Cindy, and their predecessors, Nayana Survana and Andrew Tresansky, the team’s first captains, who did much of the heavy lifting to ensure these ideas kept moving forward.

Our first racing event was a little rough, but every problem showed us the path to success. This brought us from an uncertain, slow-rolling vehicle that didn’t qualify, to our latest race, where we achieved speeds of over 150 mph while passing another vehicle. Let's look back at how that happened.

The race cars were delivered to the teams with identical hardware configurations and pre-installed software based on Linux and ROS 2. The teams were responsible for creating and installing the self-driving software. The car has a LOT of sensors (6 cameras, 3 lidar, 4 radar, GNSS, IMU, and many vehicle and engine sensors), but it doesn't have unlimited CPU processing power, so we had to make the software run as fast as it could. This was especially true with the perception system, where the car needs to perceive the real world through its sensors as quickly as possible. Every millisecond we can save here translates directly to the car seeing things sooner and having more time to plan its actions.
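To put a rough number on that, here is a minimal sketch of how far the car travels while a perception result is still in flight. The function name and the example latencies are illustrative assumptions, not part of our racing software; the only physics involved is distance = speed × time at constant speed.

```python
MPH_TO_MPS = 0.44704  # exact: 1 mile = 1609.344 m, 1 hour = 3600 s


def distance_travelled_m(speed_mph: float, latency_ms: float) -> float:
    """Metres covered at a constant speed during latency_ms milliseconds."""
    return speed_mph * MPH_TO_MPS * (latency_ms / 1000.0)


if __name__ == "__main__":
    # Illustrative latencies only; these are not measured values.
    for latency_ms in (1, 50, 100):
        d = distance_travelled_m(150, latency_ms)
        print(f"{latency_ms:>4} ms at 150 mph -> {d:.2f} m")
```

At 150 mph the car covers roughly 6.7 cm every millisecond, so tens of milliseconds of delay add up to several metres of track.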

Shaving time off the communications is where RTI Connext® became so valuable. The race car (which we affectionately named “Betty” in honor of Betty White) was delivered in an identical configuration to all teams. It was pre-installed with ROS 2 and an open-source DDS as its middleware layer. We only had a few months to get our racing software working in the vehicle and quickly discovered that we were seeing exceptionally long latency in the vehicle that we had not seen with our software during the earlier simulator-based phases of the challenge.

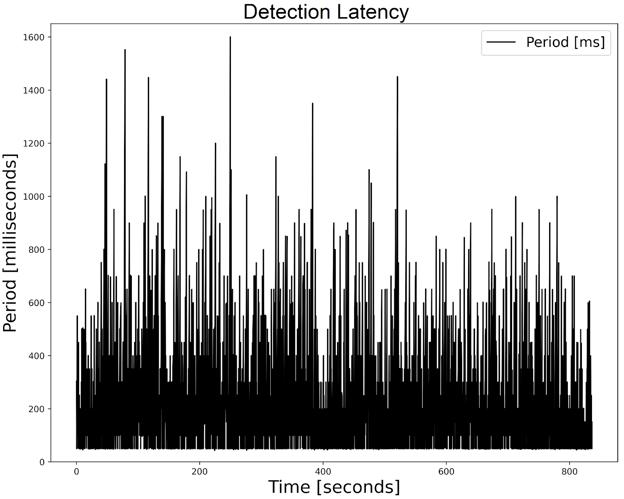

Our analysis revealed that most of the long latencies were happening in the ROS 2 and middleware layers beneath our software, sometimes resulting in delays in perception of more than 1 second:

Figure 1: LiDAR-to-Perception latency of our IAC qualifying systems using ROS 2

The lidar sensors in the car run at a 20 Hz frame rate, which gives us less than 1/20th of a second (50 milliseconds) to transport and process the lidar data into usable information. Clearly, we had some work to do to make the car run faster.
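The budget arithmetic can be sketched in a few lines. This is only an illustration under the figures quoted in this post (a 20 Hz lidar rate); the helper names are hypothetical and not taken from our codebase. It also shows how many newer frames the sensor has already produced by the time a late result arrives.

```python
import math


def frame_period_ms(rate_hz: float) -> float:
    """Time between consecutive sensor frames, in milliseconds."""
    return 1000.0 / rate_hz


def frames_behind(latency_ms: float, rate_hz: float) -> int:
    """Newer frames already produced by the time a result arrives."""
    return math.floor(latency_ms / frame_period_ms(rate_hz))


if __name__ == "__main__":
    print(frame_period_ms(20))      # per-frame budget at 20 Hz
    print(frames_behind(1000, 20))  # a 1-second delay at 20 Hz
```

In other words, a perception result that arrives a full second late describes a scene that is already 20 lidar frames old.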

For this we reached out to RTI, who had ready-made solutions to these kinds of issues. Our most urgent task was to reduce the latencies in the perception path to be under the 50 ms lidar frame period. These latencies were averaging over 100 ms using ROS 2 and its default open-source DDS middleware.

The first phase involved replacing the three ROS 2 lidar device driver software instances with a single instance of an equivalent driver built on RTI Connext. This new driver software could handle all three lidar sensors at once while running 4x faster than the ROS 2 drivers, and it required no changes to the rest of the system. It was kind of a no-brainer to use this.

The second phase was to modify our perception application (which was a ROS 2 Python application) to use the new RTI Python API for the Connext software, effectively removing the ROS 2 stack from this application while retaining compatibility with ROS 2 and the rest of our system. This effort took less than a day, but brought the biggest reductions in latency. It was surprisingly easy to get this working.

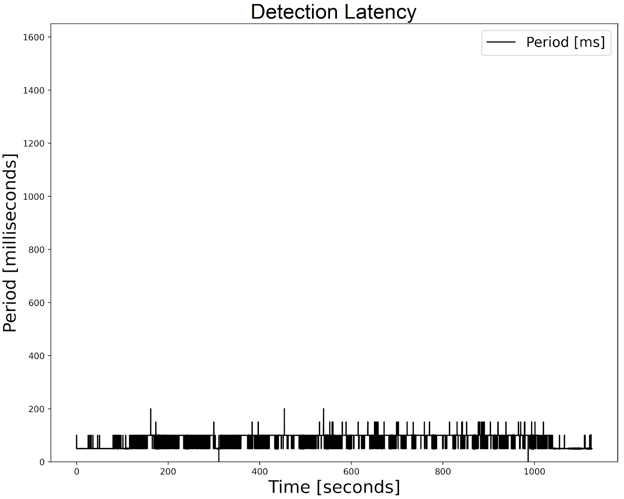

Figure 2: The dramatically reduced perception/detection latency of our system running RTI Connext in lieu of ROS 2.

The end result is that we’re now consistently under the 50 ms deadline for lidar perception, with a worst-case latency of 47 ms and an average under 40 ms. As an added bonus, our processor has more time to think about other things – like winning races. We’re planning to repeat this success on other parts of the vehicle, using Connext to replace the ROS 2 components in the critical paths of the vehicle, and freeing up valuable processing time to do more path planning and ‘what-if’ scenario calculations.
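For readers who want to run the same kind of check on their own logs, here is a minimal sketch of summarizing latency samples against a frame deadline. The `summarize` helper and the sample list are hypothetical placeholders, not our tooling or our measured data; only the 50 ms deadline comes from this post.

```python
from statistics import mean

DEADLINE_MS = 50.0  # lidar frame period at 20 Hz, as quoted in this post


def summarize(latencies_ms, deadline_ms=DEADLINE_MS):
    """Return mean, worst case, headroom and deadline misses for a run."""
    worst = max(latencies_ms)
    return {
        "mean_ms": mean(latencies_ms),
        "worst_ms": worst,
        "headroom_ms": deadline_ms - worst,  # negative means a miss
        "missed": sum(1 for x in latencies_ms if x > deadline_ms),
    }


if __name__ == "__main__":
    # Placeholder values for illustration only, NOT real measurements.
    samples = [36.0, 38.5, 41.0, 47.0, 35.5]
    print(summarize(samples))
```

Tracking the worst case alongside the mean matters here, because a single late frame is what the planner actually feels.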

Documenting our journey would be incomplete if I didn’t mention this team’s resiliency. There were definite bumps in the road, which taught us a lot. Yet every time something didn’t go as planned, the team rallied and put those learnings to work. Take, for example, our most recent race during the CES conference in Las Vegas. Three days before the race, another car crashed into ours during a training run, and we lost two days to repairs. On race day, we achieved our highest-ever speed of 154 mph and were leading the round when the car unexpectedly stopped. It turned out that it had run out of fuel, as the higher speeds caused increased fuel consumption, something we would have learned about and accounted for had we not lost those two days. It was a tough loss, but we rallied together and used it to recover, learn, improve, refine and get back on the right trajectory.

Our team and our vehicle have come so far. It’s an evolving process, as new team members come on board and some of our senior members graduate and move on. Next up is our first race in Europe, taking place June 16-18 at the famed Monza racetrack. We’re looking forward to putting our latest work and learnings to the test as we focus on breaking our current track record. There undoubtedly will be some bumps along the way, but we’re confident that our car is getting faster and more competitive. Our trajectory is gaining speed, our car is ready, and so are we.

Editor's Note: To learn how Connext can dramatically reduce latency in ROS 2 environments, please click here. To see the MIT-PITT-RW team in action, watch this video.

*The four universities on the team are the Massachusetts Institute of Technology (MIT), the University of Pittsburgh (PITT), the Rochester Institute of Technology (RIT) and the University of Waterloo (Waterloo). See our website and follow us on LinkedIn.

About the Author:

Andrew Saba is the Chief Engineer for the MIT-PITT-RW team, participating in the Indy Autonomous Challenge.


Andrew graduated from the University of Pittsburgh in December 2019 and is currently finishing his Master’s degree in Robotics at Carnegie Mellon University.

In his free time, Andrew enjoys hiking and rock climbing. Andrew also loves spending time with his friends and family, especially his sister and brother-in-law, all of whom supported him over the last 3 years.
